LEXISTEMS' Sensible.ai® technology is the applied science that powers our Sensible Solutions.

Its mission: to enable MEANING in any software stack.

"Composable solutions that understand people and data"... Delivering on this commitment requires a new approach to information processing and a framework of world-class Natural Language and Artificial Intelligence components. That, in short, is LEXISTEMS' Sensible.ai technology.

Based on 12+ years of R&D and co-development with advanced labs in the field, Sensible.ai processes everything by MEANING. With meaning, the traditional limitations of keywords or programmed intents disappear. Meaning lets Sensible.ai handle requests the way we humans think and speak, with all our flaws, variations, beauty and complexity.

Technically, Sensible.ai is a pipeline of granular workers that can be freely composed and extended to fit specific use cases. To achieve end-to-end meaning-based processing, these workers belong to one of four global categories.
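To make the idea of composable, extensible workers more concrete, here is a minimal Python sketch. It assumes a worker is simply a function that takes a shared state dictionary and returns an updated copy; the `Pipeline` class and the worker names are hypothetical and do not describe Sensible.ai's actual interfaces.

```python
from dataclasses import dataclass, field
from typing import Any, Callable

# Hypothetical worker interface: a function from state dict to new state dict.
Worker = Callable[[dict[str, Any]], dict[str, Any]]


@dataclass
class Pipeline:
    workers: list[Worker] = field(default_factory=list)

    def then(self, worker: Worker) -> "Pipeline":
        """Return a new pipeline with `worker` appended; the original is unchanged."""
        return Pipeline(self.workers + [worker])

    def run(self, state: dict[str, Any]) -> dict[str, Any]:
        # Each worker receives the output of the previous one.
        for worker in self.workers:
            state = worker(state)
        return state


# Hypothetical generic workers.
def tokenize(state):
    return {**state, "tokens": state["text"].lower().split()}


def count_tokens(state):
    return {**state, "n_tokens": len(state["tokens"])}


# Hypothetical use-case-specific worker, added by extension.
def flag_invoice_terms(state):
    return {**state, "mentions_invoice": "invoice" in state["tokens"]}


base = Pipeline().then(tokenize).then(count_tokens)
custom = base.then(flag_invoice_terms)

result = custom.run({"text": "Please resend the invoice"})
print(result["n_tokens"], result["mentions_invoice"])  # 4 True
```

Because `then` returns a new pipeline rather than mutating the existing one, a shared generic base can be specialized per use case without affecting other deployments.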

RESULTS‑DRIVEN INNOVATION

These solid foundations allow Sensible.ai to be continuously augmented with in-house R&I and selected advances from open source contributions and worldwide research. For instance, we use our own transformers / reformers for use-case-specific transfer learning from proprietary generic and vertical (domain-specific) language models.

Building on our own purpose-oriented models is also how we match or beat the state of the art in, e.g., speech recognition or document summarization, as shown in published metrics and demo use cases (see the SensibleSpeech and SensibleSummaries pages).

Another illustration of Sensible.ai's uniqueness is that it doesn't rely solely on statistical approaches like most AI shops do. Bringing in probabilistic methods, for example, helps Sensible.ai assign higher Bayesian conditional probabilities to the right interpretation when disambiguating a term from its context, which translates into better understanding, especially in context management.
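As a textbook illustration of Bayesian disambiguation (not Sensible.ai's own method), the sketch below uses a naive Bayes classifier to choose between two senses of "bank". It scores each sense by log P(sense) plus the sum of log P(word | sense) over the context words, with add-one (Laplace) smoothing, then normalizes the scores into posterior probabilities P(sense | context). The training data and priors are made up for the example.

```python
import math
from collections import Counter

# Made-up context words observed around two senses of "bank".
TRAINING = {
    "bank/finance": ["loan", "account", "deposit", "money", "account"],
    "bank/river": ["water", "fishing", "shore", "river", "water"],
}
PRIORS = {"bank/finance": 0.5, "bank/river": 0.5}


def disambiguate(context, training=TRAINING, priors=PRIORS, alpha=1.0):
    """Return (best_sense, posteriors) under a naive Bayes model."""
    vocab = {w for words in training.values() for w in words} | set(context)
    scores = {}
    for sense, words in training.items():
        counts = Counter(words)
        total = len(words) + alpha * len(vocab)
        # log P(sense) + sum of smoothed log P(word | sense)
        score = math.log(priors[sense])
        for word in context:
            score += math.log((counts[word] + alpha) / total)
        scores[sense] = score
    # Normalize log-scores into posteriors P(sense | context) (stable softmax).
    m = max(scores.values())
    exp = {s: math.exp(v - m) for s, v in scores.items()}
    z = sum(exp.values())
    posteriors = {s: e / z for s, e in exp.items()}
    return max(posteriors, key=posteriors.get), posteriors


sense, posteriors = disambiguate(["open", "an", "account", "deposit"])
print(sense)  # bank/finance
```

Context words such as "account" and "deposit" raise the conditional probability of the financial sense, which is the kind of context-driven disambiguation the paragraph above refers to.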

GREEN CODE AND ECO‑RESPONSIBILITY

Coming from the HPC community, we know well how efficient programming reduces hardware and energy requirements at the core, edge and cloud levels. That is why Sensible.ai systematically implements HPC best practices, the only ones capable of making exaflop supercomputers a sustainable reality.

Sensible.ai's internal architecture itself results from assessing both application and energy efficiency. This has a great impact on how derived applications and models behave energy-wise: most of them can run on low-consumption devices at the edge, users' time to information (and therefore processing and API calls) is reduced, and storage needs are minimized.

To help our customers reach their climate neutrality objectives, Sensible.ai-powered applications include their own carbon footprint reports for overall training and typical inference. In production, they optionally return actual carbon metrics with every query result.
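A per-query carbon figure is commonly estimated as the energy consumed multiplied by the carbon intensity of the electricity used: energy (kWh) = average power (W) × duration (s) × PUE / 3,600,000, then emissions (gCO2e) = energy (kWh) × grid intensity (gCO2e/kWh). The sketch below assumes placeholder values (30 W average draw, 50 gCO2e/kWh) and a hypothetical `carbon` field in the response; it is not Sensible.ai's actual reporting format.

```python
import time

JOULES_PER_KWH = 3_600_000


def carbon_grams(avg_power_watts, seconds, grid_gco2e_per_kwh, pue=1.0):
    """Estimate emissions in gCO2e as energy (kWh) x grid carbon intensity.

    `pue` (power usage effectiveness) scales device energy to facility-level
    energy; 1.0 means no datacenter overhead is counted.
    """
    energy_kwh = avg_power_watts * seconds * pue / JOULES_PER_KWH
    return energy_kwh * grid_gco2e_per_kwh


def answer_with_carbon(query, avg_power_watts=30.0, grid_gco2e_per_kwh=50.0):
    # Power and grid-intensity defaults are placeholder assumptions.
    start = time.perf_counter()
    answer = query.upper()  # stand-in for real inference work
    elapsed = time.perf_counter() - start
    return {
        "result": answer,
        "carbon": {  # hypothetical response field
            "seconds": elapsed,
            "gco2e": carbon_grams(avg_power_watts, elapsed, grid_gco2e_per_kwh),
        },
    }


print(carbon_grams(1000, 3600, 100))  # 1 kWh at 100 g/kWh -> 100.0
```

In a real deployment, the power figure would come from hardware counters or measured profiles and the grid intensity from the hosting region, rather than from fixed defaults.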

DATA SECURITY + USER PRIVACY

As a framework of components, Sensible.ai actively enforces data security and user privacy from end to end.

It guarantees that all data and processes remain within customers' infrastructures, are shielded from public access, and use secured network protocols. No 3rd-party services, no external clouds of dubious jurisdiction. As shown in the diagram below, this is by design.

The benefit is plain and simple: no risk of data leak or predation.

Sensible.ai-powered applications give customers total control over the security of their data and the privacy of their end users, in processing, storage and transport.

This makes them fully compliant with every GDPR-type regulation, today and tomorrow.

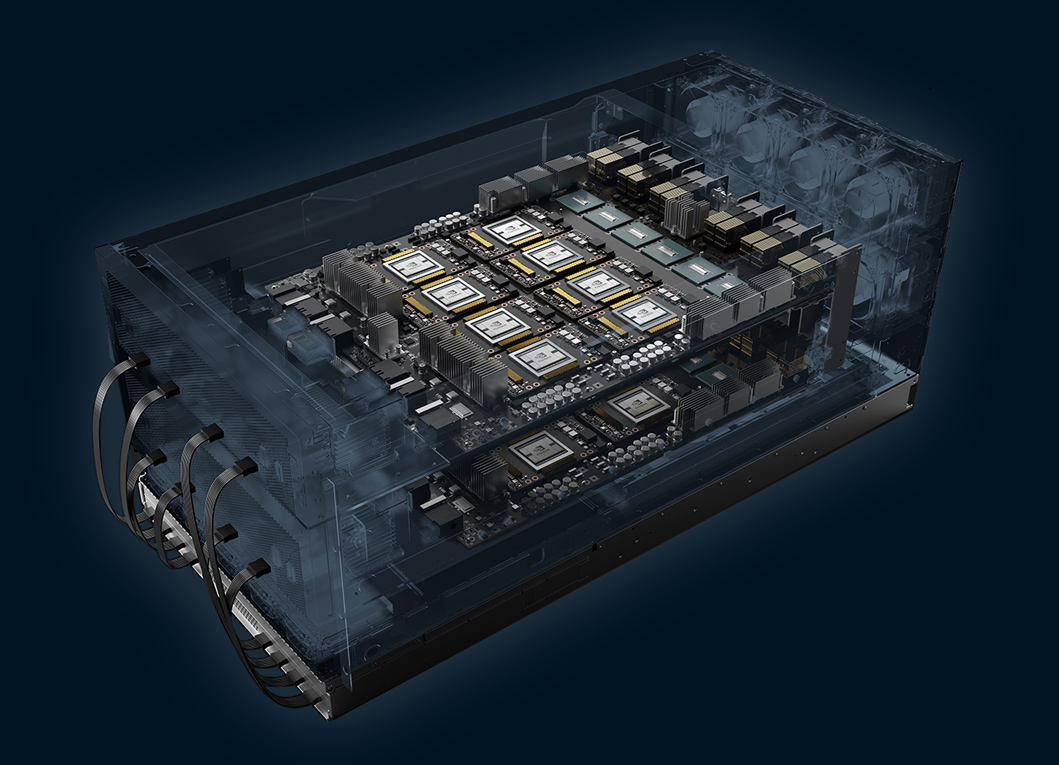

HARDWARE AND SOFTWARE AGNOSTICISM

Sensible.ai's components and models are developed and trained in-house, on partners' compute infrastructures (such as EDF / Exaion's Noé) or on TOP500 HPC clusters (such as GENCI's Jean Zay), depending on the workload.

They run equally well on CPUs, GPUs or specialized processors such as APUs, TPUs and FPGAs.

Sensible.ai-powered applications can be deployed as backend APIs (cloud) or embedded within devices (edge). Backend APIs are delivered as container images that can be orchestrated on any infrastructure, including fully on-premises ones. Migration paths from cloud to partial or full edge deployment are available by design.

Sensible.ai is equally UI-agnostic, and therefore compatible with any frontend / middleware stack and components. It includes IIFEs (immediately invoked function expressions) that make granular data consumption in UIs a delicious piece of cake.

(Image courtesy NVIDIA Corp.)

LANGUAGES OF CHOICE

Depending on the task, Sensible.ai uses programming languages that guarantee maximum performance and easy integration of customers' plugins for specific / sensitive jobs. Sensible.ai exposes connectors everywhere, so LEXISTEMS need not see what's going on in customers' proprietary code.

Backend-wise, Python allows rapid assimilation of research libraries, which can later be customized, then cythonized or ported for production speedups of up to 50x. Rust is the standard for mature components, giving them near-assembler speed and memory safety. So is NVIDIA CUDA for GPU / parallel acceleration.

Data-wise, Sensible.ai applies (and contributes to) Open Data standards such as JSON-LD, RDF, OWL / OWL-DL, SPARQL and Turtle, on universal, language-agnostic ontologies derived from schema.org. In plain English: no proprietary formats, no breaking changes, and total reusability / extensibility of all data-driven efforts.
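To illustrate what schema.org-based JSON-LD data looks like, and how it can be extended without breaking existing consumers, here is a small Python sketch. The organization, its values and the `https://example.org/vocab#` extension namespace are hypothetical; only the schema.org context and the `Organization` / `PostalAddress` types come from the public vocabulary.

```python
import json

# Minimal JSON-LD document using the public schema.org vocabulary.
# The entity and its values are illustrative only.
doc = {
    "@context": "https://schema.org",
    "@type": "Organization",
    "name": "Example Corp",
    "address": {
        "@type": "PostalAddress",
        "addressLocality": "Paris",
        "addressCountry": "FR",
    },
}

# Extension without breaking changes: a custom term lives in its own
# (hypothetical) namespace declared in an array context, so consumers that
# only understand schema.org can safely ignore it.
extended = {
    "@context": [
        "https://schema.org",
        {"ex": "https://example.org/vocab#"},
    ],
    **{k: v for k, v in doc.items() if k != "@context"},
    "ex:industryCode": "6201",  # illustrative value
}

print(json.dumps(extended, indent=2))
```

The base document is left untouched, and the extended one remains plain JSON that any JSON-LD processor can expand to RDF.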

Frontend-wise, JavaScript flavors are used throughout Sensible.ai's codebase for universal adaptation to any UI.